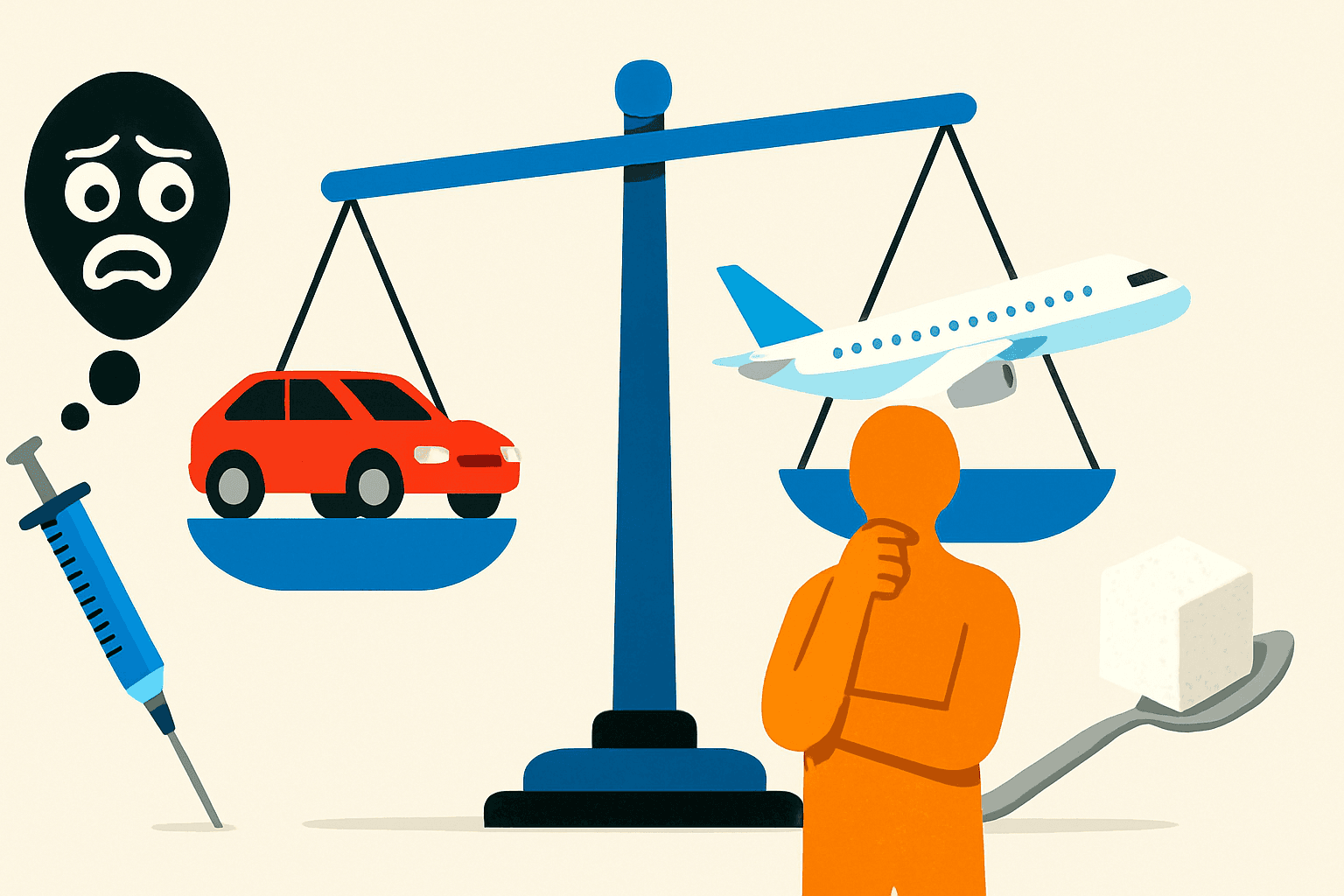

In the United States, the September 11 attacks killed approximately 3,000 people. In the year following, an estimated 1,500 additional Americans died in car accidents because they switched from flying to driving, driving being roughly 65 times more dangerous per mile than commercial aviation. The fear of terrorism generated by those attacks caused, directly and measurably, more road deaths in one year than the total passenger fatalities on US commercial flights in the preceding decade. The people who died in those car accidents were not killed by terrorists. They were killed by the gap between perceived and actual risk, operating through ordinary human decision-making at scale.

This is not an isolated case. It is a demonstration of a systematic feature of human cognition that kills people regularly, usually in less dramatic and therefore less documented ways.

How Risk Assessment Is Supposed to Work

The rational model of risk assessment is simple in principle: multiply the probability of an event by its magnitude, compare that figure across available options, and choose the option with the lowest expected harm. This works reasonably well for known risks with stable probabilities and measurable outcomes. It works very badly for the kinds of risks humans actually encounter: emotionally charged, vividly imaginable, socially loaded, and embedded in environments shaped by other people's choices about what information to provide.
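The rational model can be sketched in a few lines of Python. This is only an illustration: the `Option` class, the function names, and the per-mile probabilities are hypothetical. The one figure taken from this piece is the roughly 65-to-1 ratio between driving and flying; the absolute values are placeholders chosen to reproduce that ratio, not measured rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    """One available choice, described the way the rational model sees it."""
    name: str
    probability: float  # chance of the harmful event per mile (placeholder value)
    magnitude: float    # size of the harm if it happens, in arbitrary units


def expected_harm(option: Option) -> float:
    # Expected harm = probability of the event times its magnitude.
    return option.probability * option.magnitude


def safest(options: list[Option]) -> Option:
    # Choose the option with the lowest expected harm.
    return min(options, key=expected_harm)


# Placeholder numbers: only the 65:1 ratio comes from the text above.
options = [
    Option("drive", probability=65e-9, magnitude=1.0),
    Option("fly", probability=1e-9, magnitude=1.0),
]

for option in options:
    print(f"{option.name}: expected harm per mile = {expected_harm(option):.2e}")
print("lowest expected harm:", safest(options).name)
```

The calculation has no term for how vivid, recent, or frightening an option feels, and that is exactly the input human risk perception weights most heavily.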

The machinery we actually use for risk assessment was not built for the world we currently inhabit. It was built for an ancestral environment in which risks were immediate, visible, physical, and social. A large predator, a violent rival, a contaminated water source, rejection from the group: these were the threats our cognitive systems were calibrated to detect. They are all vivid, present, and rapidly actionable. Evolution rewarded fast, strong responses to these threats. It did not reward careful probabilistic reasoning about slow-moving, invisible, or statistically complex risks, because those weren't the threats that killed your ancestors before they could reproduce.

The Heuristics That Fail Us

Several well-documented cognitive heuristics produce systematic risk miscalibration. The availability heuristic leads us to judge the likelihood of events by how easily they come to mind, which tracks how recently or vividly they've been reported, not how common they actually are. Plane crashes are memorable, dramatic, and heavily reported; car accidents are ordinary. Availability therefore pushes perceived risk in the opposite direction from actual risk.

Dread risk is the amplification of any risk that involves loss of control, catastrophic potential, involuntary exposure, and unknown mechanisms. It produces responses disproportionate to the actual magnitude. Nuclear power generation, despite a safety record substantially better than coal, natural gas, or oil per unit of energy produced, triggers dread responses that have led several countries to scale it back or abandon it in favour of fossil fuels with far worse death rates. We replaced a safer technology with a more dangerous one because one of them feels dangerous and the other doesn't.

Scope insensitivity means we don't scale our concern proportionately with magnitude. In one widely cited study, people were willing to contribute roughly the same amount to save 2,000 birds from drowning in oil ponds as to save 200,000: the scope of the harm barely affected the emotional response. This produces policy environments where vivid, specific, small-scale harms attract enormous resources while vast diffuse harms with the same or greater total impact are largely ignored.

The problem is not that people are stupid. It's that the cognitive tools available are approximately 200,000 years old, and the risk environment changed very recently. The mismatch is predictable, systematic, and causes substantial preventable harm.

Why It's Hard to Fix

Understanding the biases helps somewhat: studies show that people who know about the availability heuristic make marginally better risk judgements. But knowing about a bias and correcting for it are different things. The emotional systems that generate risk responses operate fast and automatically; the deliberate reasoning that might correct them is slow and effortful, and runs on limited cognitive resources. Correcting for availability bias requires noticing that you're using it, recalling relevant base rates, doing actual mental arithmetic, and overriding the emotional response, all under conditions where you're often busy, distracted, or not particularly motivated to do the cognitive work.

The policy implication is that improving individual risk literacy, while worthwhile, is insufficient. Environments and institutions need to be designed to compensate for the biases rather than exploit them, which is the opposite of what media systems, political incentives, and commercial interests typically do.

We are exactly as bad at risk assessment as you'd expect a species to be that evolved its risk-detection systems in a completely different world from the one it now inhabits.
